Saliency-based Analysis of Shortcut Learning in CNNs

A printable summary generated from outputs/. Repository: abidali9000/Deep-Learning

1. Abstract

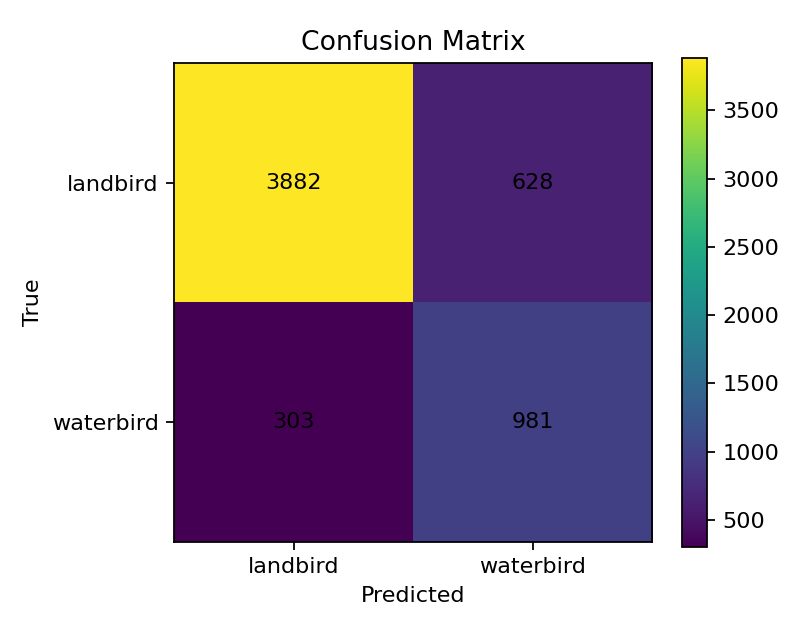

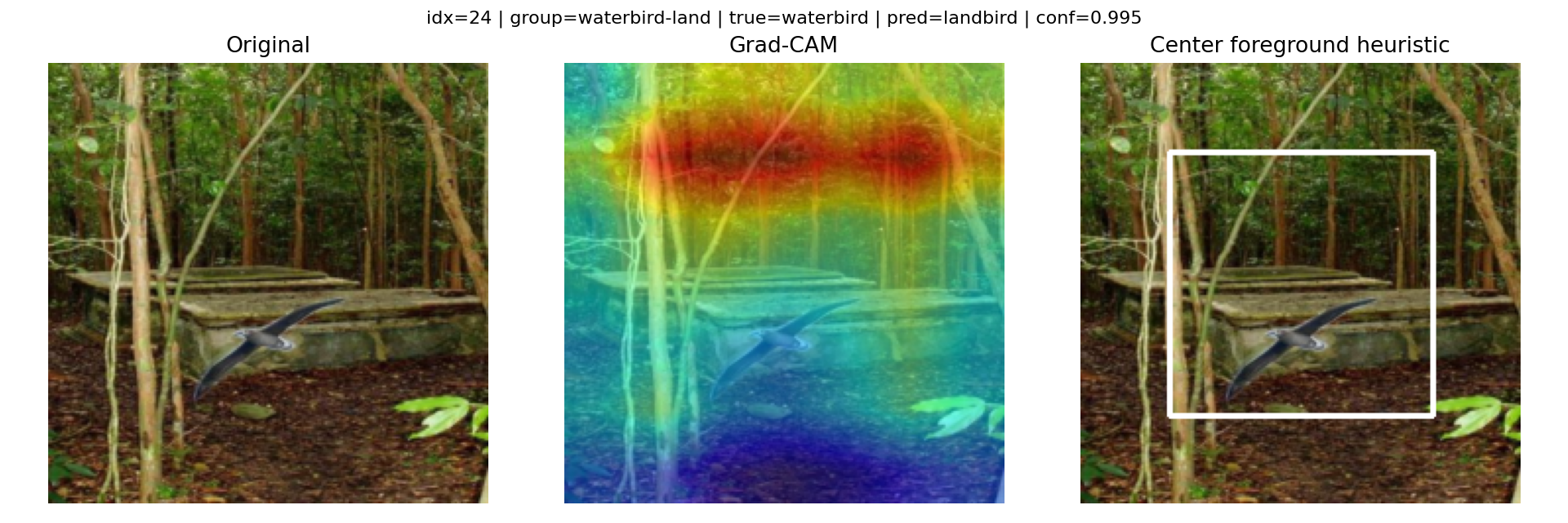

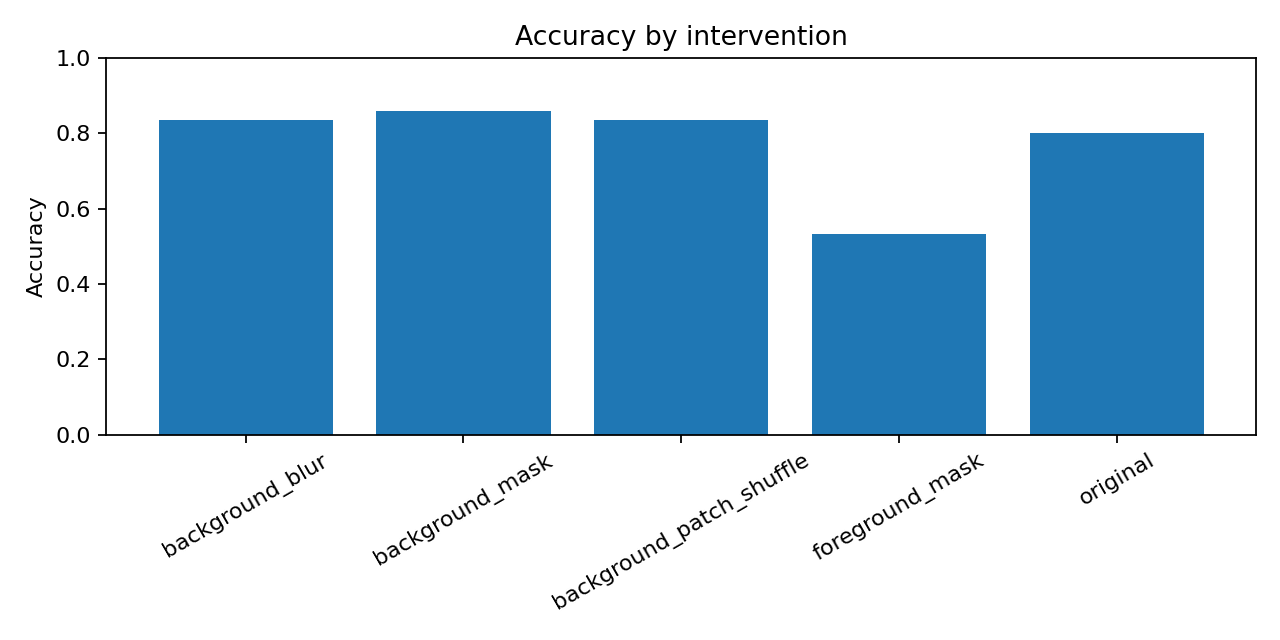

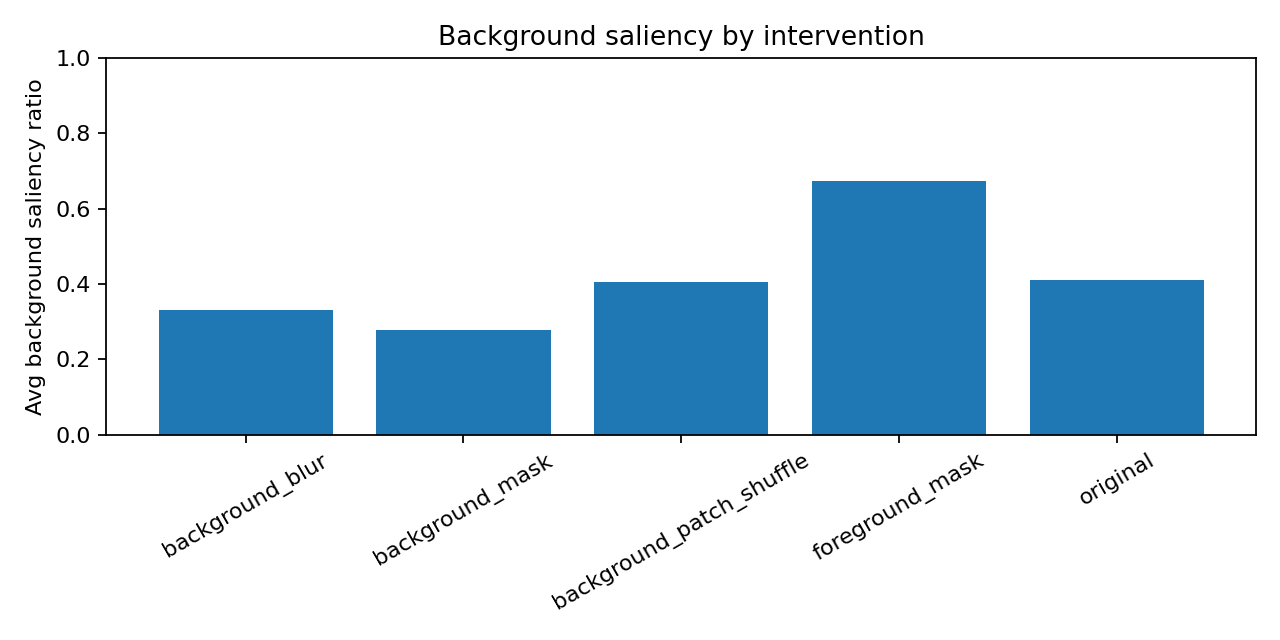

We train a ResNet18 on the Waterbirds dataset, where background and bird type are spuriously correlated, and use Grad-CAM together with a foreground/background attention-bias score to test whether the model relies on background cues. The CNN reaches 83.9% overall accuracy on the balanced test split but only 59.5% on the worst (waterbird-on-land) subgroup. Four inference-time interventions provide causal evidence that background cues influence predictions on the conflict subgroups: foreground masking flips 31.8% of predictions, and background masking raises accuracy from 80.2% on the unmodified images of the intervention evaluation to 86.0%. We conclude that the model has partially internalised the shortcut background → bird type.

2. Method

The pipeline is implemented in eight Python files inside src/ (src/train.py, src/evaluate.py, src/gradcam_analysis.py, src/interventions.py, etc.). The full model configuration lives in config.yaml.

- Backbone: ImageNet-pretrained ResNet18, FC replaced with 2 logits.

- Optimiser: Adam, learning rate 1e-4, weight decay 1e-4, batch size 32, 15 epochs, 224×224 inputs.

- Checkpoint selection: the epoch with the best validation worst-group accuracy.

- Grad-CAM target layer: model.layer4[-1], predicted-class target.

- Foreground heuristic: 60% center crop. Bias score = background saliency / total saliency.

- Interventions: background blur (Gaussian k=31), background mask (grey 0.5), background patch shuffle (16×16), foreground mask (grey 0.5).
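The foreground heuristic, the bias score, and the two grey-mask interventions listed above can be sketched with NumPy alone. This is a minimal illustration under stated assumptions, not the code in src/: the helper names (center_foreground_mask, bias_score, grey_mask) are hypothetical, the 60% crop is assumed to scale each side of the image, and the saliency map is assumed to be a non-negative 2-D array at image resolution.

```python
import numpy as np


def center_foreground_mask(height, width, fraction=0.6):
    """Boolean mask that is True inside a centered crop.

    Assumption: `fraction` scales each side, so the box covers roughly
    fraction**2 of the image area.
    """
    box_h = int(round(height * fraction))
    box_w = int(round(width * fraction))
    top = (height - box_h) // 2
    left = (width - box_w) // 2
    mask = np.zeros((height, width), dtype=bool)
    mask[top:top + box_h, left:left + box_w] = True
    return mask


def bias_score(saliency, fg_mask):
    """Share of the total saliency that falls on the background."""
    total = float(saliency.sum())
    if total == 0.0:
        return 0.0  # empty map: define the score as 0 rather than divide by zero
    return float(saliency[~fg_mask].sum()) / total


def grey_mask(image, fg_mask, target="background", value=0.5):
    """Fill the foreground or background of an HxWxC image in [0, 1] with flat grey."""
    region = ~fg_mask if target == "background" else fg_mask
    out = image.copy()
    out[region] = value
    return out


# Usage on synthetic data (no real Waterbirds images involved).
fg = center_foreground_mask(224, 224)
uniform_saliency = np.ones((224, 224))
image = np.random.default_rng(0).random((224, 224, 3))
bg_masked = grey_mask(image, fg, target="background")
fg_masked = grey_mask(image, fg, target="foreground")
print(f"bias score of a uniform saliency map: {bias_score(uniform_saliency, fg):.3f}")
```

Under the per-side assumption the background covers about 64% of the pixels, so a saliency map with no spatial preference would score about 0.64. That figure depends entirely on how the 60% crop is defined and should be checked against the repository before comparing it with the reported bias scores.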

3. Test classification metrics

- Overall accuracy: 83.9%

- Macro precision: 76.9%

- Macro recall: 81.2%

- Macro F1: 78.6%

- Worst-group accuracy: 59.5%

Subgroup metrics

| Subgroup | Count | Accuracy | Avg confidence |
|---|---|---|---|
| Waterbird on water (majority) | 642 | 93.3% | 96.9% |
| Waterbird on land (conflict) | 642 | 59.5% | 90.1% |
| Landbird on land (majority) | 2255 | 98.6% | 98.4% |
| Landbird on water (conflict) | 2255 | 73.6% | 87.6% |
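As a consistency check, the headline metrics can be approximately recovered from the subgroup table alone. The sketch below is a worked calculation, not part of the repository: it assumes the task is binary (waterbird vs landbird), so every misclassified image of one class counts as a false positive for the other, and it rounds the per-subgroup correct counts because the table reports accuracies to one decimal place.

```python
# Subgroup rows copied from the table above: (true class, count, accuracy).
subgroups = {
    "waterbird_on_water": ("waterbird", 642, 0.933),
    "waterbird_on_land": ("waterbird", 642, 0.595),
    "landbird_on_land": ("landbird", 2255, 0.986),
    "landbird_on_water": ("landbird", 2255, 0.736),
}

# Approximate number of correct predictions per true class.
correct = {"waterbird": 0, "landbird": 0}
total = {"waterbird": 0, "landbird": 0}
for label, count, acc in subgroups.values():
    correct[label] += round(count * acc)
    total[label] += count

overall_acc = sum(correct.values()) / sum(total.values())
worst_group_acc = min(acc for _, _, acc in subgroups.values())


def class_metrics(label, other):
    """Precision, recall and F1 for one class of a two-class problem."""
    tp = correct[label]
    fn = total[label] - tp
    fp = total[other] - correct[other]  # errors on the other class are predicted as `label`
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


per_class = [
    class_metrics("waterbird", "landbird"),
    class_metrics("landbird", "waterbird"),
]
# Macro average: unweighted mean over the two classes.
macro_precision, macro_recall, macro_f1 = (
    sum(m[i] for m in per_class) / len(per_class) for i in range(3)
)

print(f"overall accuracy   {overall_acc:.3f}")
print(f"worst-group acc.   {worst_group_acc:.3f}")
print(f"macro precision    {macro_precision:.3f}")
print(f"macro recall       {macro_recall:.3f}")
print(f"macro F1           {macro_f1:.3f}")
```

The recovered values agree with the reported 83.9%, 76.9%, 81.2% and 78.6% to within rounding, which suggests the macro metrics and the subgroup table were computed on the same test predictions.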

4. Training history (selected epochs)

| Epoch | Train acc | Val acc | Worst-group acc | WB-land | LB-water |
|---|---|---|---|---|---|
| 1 | 93.7% | 86.0% | 32.3% | 32.3% | 89.5% |
| 3 | 98.7% | 80.2% | 54.1% | 54.1% | 64.2% |
| 5 | 99.1% | 80.7% | 26.3% | 26.3% | 74.9% |
| 7 | 99.5% | 81.2% | 50.4% | 50.4% | 67.6% |
| 9 | 99.7% | 81.8% | 40.6% | 40.6% | 72.5% |
| 11 | 99.7% | 83.6% | 29.3% | 29.3% | 80.9% |
| 13 | 99.8% | 81.6% | 26.3% | 26.3% | 76.8% |
| 15 | 99.2% | 79.0% | 16.5% | 16.5% | 73.6% |
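The checkpoint rule from the Method section (best validation worst-group accuracy) can be illustrated on the rows above. This is a sketch over the selected epochs only: the table omits the even-numbered epochs, so the checkpoint actually saved by the training run may differ, and the variable names are illustrative.

```python
# Selected epochs copied from the table above (validation metrics as fractions).
history = [
    {"epoch": 1, "val_acc": 0.860, "worst_group_acc": 0.323},
    {"epoch": 3, "val_acc": 0.802, "worst_group_acc": 0.541},
    {"epoch": 5, "val_acc": 0.807, "worst_group_acc": 0.263},
    {"epoch": 7, "val_acc": 0.812, "worst_group_acc": 0.504},
    {"epoch": 9, "val_acc": 0.818, "worst_group_acc": 0.406},
    {"epoch": 11, "val_acc": 0.836, "worst_group_acc": 0.293},
    {"epoch": 13, "val_acc": 0.816, "worst_group_acc": 0.263},
    {"epoch": 15, "val_acc": 0.790, "worst_group_acc": 0.165},
]

# Selection by worst-group accuracy (the rule stated in the Method section).
best_by_worst_group = max(history, key=lambda row: row["worst_group_acc"])

# Selection by plain validation accuracy, shown only for contrast.
best_by_val_acc = max(history, key=lambda row: row["val_acc"])

print("best by worst-group accuracy: epoch", best_by_worst_group["epoch"])
print("best by validation accuracy:  epoch", best_by_val_acc["epoch"])
```

Among the listed rows the two criteria disagree: worst-group accuracy favours epoch 3, whereas plain validation accuracy favours epoch 1, whose worst-group accuracy is only 32.3%.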

5. Grad-CAM saliency by subgroup

| Subgroup | Foreground | Background (bias) | Sample acc. |
|---|---|---|---|
| Landbird on land (majority) | 51.6% | 48.4% | 93.3% |
| Landbird on water (conflict) | 59.3% | 40.7% | 60.0% |
| Waterbird on land (conflict) | 59.3% | 40.7% | 70.0% |
| Waterbird on water (majority) | 65.2% | 34.8% | 96.7% |
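For reference, the Grad-CAM combination step behind these saliency maps can be written in a few lines of NumPy. This is a sketch of the standard formulation (channel weights from spatially averaged gradients, weighted sum of activations, then ReLU), not the code in src/gradcam_analysis.py. It assumes the activations of the target layer and the gradients of the target-class score with respect to them have already been captured, for example with framework hooks, and the final scaling to [0, 1] is a common convention assumed here.

```python
import numpy as np


def grad_cam(activations, gradients):
    """Combine target-layer activations and gradients into a saliency map.

    activations, gradients: arrays of shape (K, H, W), where gradients holds
    d(target-class score) / d(activations).
    """
    weights = gradients.mean(axis=(1, 2))              # one weight per channel, shape (K,)
    cam = np.tensordot(weights, activations, axes=1)   # weighted sum over channels, shape (H, W)
    cam = np.maximum(cam, 0.0)                         # ReLU keeps positive evidence only
    peak = cam.max()
    return cam / peak if peak > 0 else cam             # assumed convention: scale to [0, 1]


# Synthetic stand-ins with the shape of ResNet18's last block at 224x224 input.
rng = np.random.default_rng(0)
acts = rng.random((512, 7, 7))
grads = rng.standard_normal((512, 7, 7))
cam = grad_cam(acts, grads)
print(cam.shape, float(cam.min()), float(cam.max()))
```

The resulting 7×7 map would still need to be upsampled to 224×224 before it can be compared with the center-crop foreground mask; the interpolation used for that step is not stated in this summary.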

Representative shortcut failures

6. Intervention results

| Condition | Accuracy | Flip rate | Avg BG saliency |
|---|---|---|---|
| Background blur | 83.5% | 7.7% | 33.0% |
| Background mask | 86.0% | 10.4% | 27.8% |
| Background patch shuffle | 83.6% | 12.0% | 40.6% |
| Foreground mask | 53.4% | 31.8% | 67.4% |
| Original | 80.2% | 0.0% | 41.0% |
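The accuracy and flip-rate columns can be read as follows. The sketch assumes the flip rate is the fraction of images whose predicted class under an intervention differs from the prediction on the unmodified image, which is consistent with the 0.0% reported for the Original row; the function names and the toy arrays are illustrative and do not come from the repository.

```python
import numpy as np


def accuracy(preds, labels):
    """Fraction of predictions that match the ground-truth labels."""
    return float(np.mean(np.asarray(preds) == np.asarray(labels)))


def flip_rate(original_preds, intervened_preds):
    """Fraction of predictions that change relative to the unmodified images."""
    return float(np.mean(np.asarray(original_preds) != np.asarray(intervened_preds)))


# Toy example with five images (1 = waterbird, 0 = landbird).
labels = [1, 1, 0, 0, 1]
original = [1, 0, 0, 1, 1]      # predictions on unmodified images
intervened = [1, 1, 0, 0, 0]    # predictions after a hypothetical intervention

print("original accuracy:  ", accuracy(original, labels))
print("intervened accuracy:", accuracy(intervened, labels))
print("flip rate:          ", flip_rate(original, intervened))
```

In the toy data two flips correct earlier mistakes and one introduces a new one, so accuracy rises even though 60% of predictions change. The same mechanism would explain how background masking can flip 10.4% of predictions and still improve accuracy.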

Per subgroup × condition (selected)

| Condition | Subgroup | Accuracy | Flip rate | Avg BG saliency |
|---|---|---|---|---|
| Background blur | Landbird on water (conflict) | 79.1% | 12.8% | 28.7% |
| Background blur | Waterbird on land (conflict) | 53.2% | 11.5% | 35.1% |
| Background mask | Landbird on water (conflict) | 86.2% | 17.8% | 27.7% |
| Background mask | Waterbird on land (conflict) | 59.7% | 12.2% | 26.8% |
| Background patch shuffle | Landbird on water (conflict) | 83.6% | 19.8% | 38.8% |
| Background patch shuffle | Waterbird on land (conflict) | 48.9% | 15.8% | 41.2% |
| Foreground mask | Landbird on water (conflict) | 20.6% | 55.6% | 70.2% |
| Foreground mask | Waterbird on land (conflict) | 7.2% | 47.5% | 66.2% |
| Original | Landbird on water (conflict) | 70.0% | 0.0% | 36.4% |
| Original | Waterbird on land (conflict) | 50.4% | 0.0% | 44.7% |
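The effect of each intervention is easier to read as a change in percentage points relative to the Original rows. The short calculation below uses only the accuracies in the table above; the dictionary layout and the abbreviations LB-water and WB-land (as in the training-history table) are choices made for this illustration.

```python
# Accuracy (%) on the two conflict subgroups, copied from the table above.
acc = {
    "Original": {"LB-water": 70.0, "WB-land": 50.4},
    "Background blur": {"LB-water": 79.1, "WB-land": 53.2},
    "Background mask": {"LB-water": 86.2, "WB-land": 59.7},
    "Background patch shuffle": {"LB-water": 83.6, "WB-land": 48.9},
    "Foreground mask": {"LB-water": 20.6, "WB-land": 7.2},
}

baseline = acc["Original"]

# Change in percentage points versus the unmodified images.
deltas = {
    condition: {group: round(value - baseline[group], 1) for group, value in groups.items()}
    for condition, groups in acc.items()
    if condition != "Original"
}

for condition, change in deltas.items():
    print(f"{condition:26s} LB-water {change['LB-water']:+6.1f}   WB-land {change['WB-land']:+6.1f}")
```

All three background interventions raise landbird-on-water accuracy (by 9.1 to 16.2 points). Waterbird-on-land gains 9.3 points under background masking but loses 1.5 points under patch shuffling, and foreground masking removes more than 43 points from both subgroups.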

7. Conclusion

The CNN's overall test accuracy of 83.9% hides a 24.4-percentage-point gap to its worst subgroup (59.5%). Saliency analysis shows that roughly 35% to 48% of Grad-CAM saliency falls on the background, depending on the subgroup, and inference-time interventions support a causal role for the background: foreground masking reduces accuracy to 53.4% with 31.8% flips, while background masking raises accuracy from 80.2% on the unmodified images to 86.0%. This is consistent with shortcut learning: the model uses the bird, but it also relies on the background to a degree that hurts minority-subgroup generalisation.

8. References

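- Sagawa et al., 2019. Distributionally Robust Neural Networks for Group Shifts: On the Importance of Regularization for Worst-Case Generalization. arXiv:1911.08731.

- Geirhos et al., 2020. Shortcut Learning in Deep Neural Networks. Nature Machine Intelligence.

- Selvaraju et al., 2017. Grad-CAM: Visual Explanations from Deep Networks via Gradient-based Localization. ICCV.

- Wah et al., 2011. The Caltech-UCSD Birds-200-2011 Dataset.

- Zhou et al., 2017. Places: A 10 Million Image Database for Scene Recognition.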